For years, running deep learning models required powerful GPUs, cloud servers, and large amounts of memory. Today, that paradigm is changing. TinyML and embedded AI now let neural networks run directly on microcontrollers that consume only milliwatts of power.

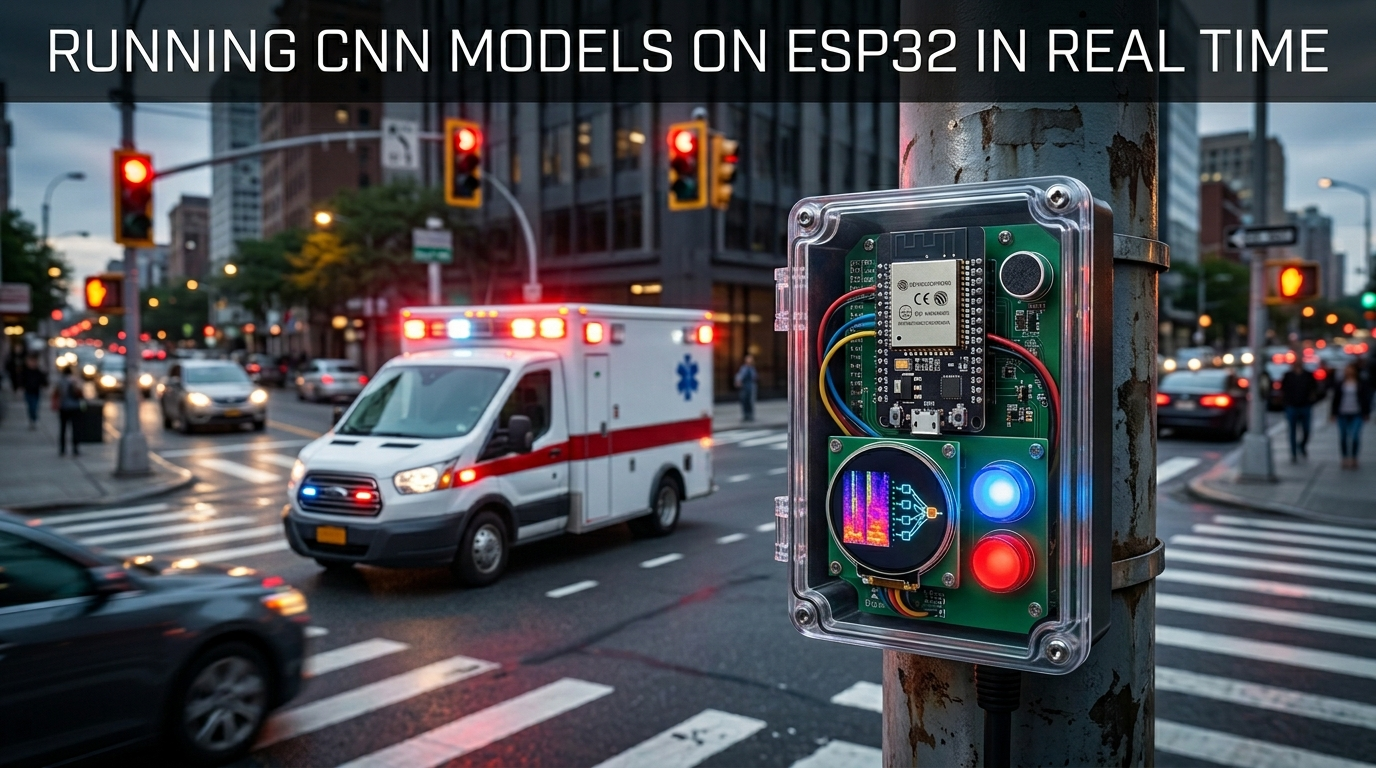

Among these devices, the ESP32 has emerged as one of the most accessible and powerful platforms for real-time edge intelligence. At Greenwave TechLabs, our emergency traffic preemption system uses a lightweight CNN running directly on an ESP32 microcontroller to detect ambulance sirens in real time.

How It Works

The model operates on Mel-Frequency Cepstral Coefficient (MFCC) features, which compress raw audio into a compact spectral representation modeled on human auditory perception. These features are then passed to a quantized convolutional neural network for siren classification.
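To make the feature step concrete, here is a minimal sketch of MFCC extraction for a single audio frame, written in plain Python for readability rather than for the ESP32 itself. The frame length, the 16 kHz sample rate, the 20 mel filters, and the 13 coefficients are illustrative assumptions, not the parameters of our deployed model, and the function names are hypothetical.

```python
import math

def hz_to_mel(f):
    return 2595.0 * math.log10(1.0 + f / 700.0)

def mel_to_hz(m):
    return 700.0 * (10.0 ** (m / 2595.0) - 1.0)

def power_spectrum(frame):
    """Apply a Hamming window, then return the one-sided power spectrum (naive DFT)."""
    n = len(frame)
    windowed = [s * (0.54 - 0.46 * math.cos(2 * math.pi * i / (n - 1)))
                for i, s in enumerate(frame)]
    spectrum = []
    for k in range(n // 2 + 1):
        re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(windowed))
        im = -sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(windowed))
        spectrum.append((re * re + im * im) / n)
    return spectrum

def mel_filterbank(num_filters, n_fft, sample_rate):
    """Build triangular filters whose centers are evenly spaced on the mel scale."""
    low, high = hz_to_mel(0.0), hz_to_mel(sample_rate / 2.0)
    mel_points = [low + (high - low) * i / (num_filters + 1)
                  for i in range(num_filters + 2)]
    bins = [int((n_fft + 1) * mel_to_hz(m) / sample_rate) for m in mel_points]
    bank = []
    for j in range(1, num_filters + 1):
        left, center, right = bins[j - 1], bins[j], bins[j + 1]
        filt = [0.0] * (n_fft // 2 + 1)
        for k in range(left, center):      # rising edge
            filt[k] = (k - left) / (center - left)
        for k in range(center, right):     # falling edge
            filt[k] = (right - k) / (right - center)
        bank.append(filt)
    return bank

def mfcc(frame, sample_rate=16000, num_filters=20, num_coeffs=13):
    """Assumed parameters: 16 kHz audio, 20 mel filters, 13 coefficients."""
    spec = power_spectrum(frame)
    bank = mel_filterbank(num_filters, len(frame), sample_rate)
    # Log of each filter's energy; the small constant avoids log(0).
    log_energies = [math.log(sum(w * p for w, p in zip(f, spec)) + 1e-10)
                    for f in bank]
    # DCT-II decorrelates the log mel energies into cepstral coefficients.
    return [sum(e * math.cos(math.pi * c * (j + 0.5) / num_filters)
                for j, e in enumerate(log_energies))
            for c in range(num_coeffs)]

# A 256-sample frame holding a 1 kHz test tone.
tone = [math.sin(2 * math.pi * 1000 * i / 16000) for i in range(256)]
coeffs = mfcc(tone)
```

Stacking the coefficient vectors from consecutive frames gives a small 2D array, the kind of input a CNN classifier typically consumes. On a microcontroller the naive DFT would normally be replaced by an FFT.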

Embedded Pipeline Performance

- 95.6% siren detection accuracy

- Approximately 120 ms inference latency

- Real-time execution on ESP32 hardware

- Model size reduced to only 95 KB after quantization
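The size reduction comes from storing weights at lower precision. As an illustration only, the sketch below shows 8-bit affine quantization, a scheme commonly used for microcontroller models. This article does not specify which scheme our model uses, so the int8 range and the helper names here are assumptions.

```python
def quantize_params(values, qmin=-128, qmax=127):
    """Pick a scale and zero point so that the range of `values` (including 0) fits in int8."""
    lo, hi = min(min(values), 0.0), max(max(values), 0.0)
    scale = (hi - lo) / (qmax - qmin) or 1.0   # fall back to 1.0 if all values are 0
    zero_point = int(round(qmin - lo / scale))
    return scale, zero_point

def quantize(values, scale, zero_point, qmin=-128, qmax=127):
    """Map floats to integers, clamping to the int8 range."""
    return [max(qmin, min(qmax, int(round(v / scale)) + zero_point)) for v in values]

def dequantize(q_values, scale, zero_point):
    """Recover approximate floats from the stored integers."""
    return [scale * (q - zero_point) for q in q_values]

weights = [-0.5, 0.0, 0.25, 1.0]             # made-up example weights
scale, zero_point = quantize_params(weights)
q_weights = quantize(weights, scale, zero_point)
restored = dequantize(q_weights, scale, zero_point)
```

Each weight is stored in one byte instead of the four bytes of a 32-bit float, at the cost of a rounding error of roughly half of `scale` per weight.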

Why Edge Inference Matters

Running inference directly on low-cost embedded hardware reduces cloud dependency, communication latency, bandwidth requirements, power consumption, and infrastructure costs. Edge inference also improves privacy and resilience: because data is processed locally, systems remain operational even during connectivity loss or network congestion.

The TensorFlow Lite for Microcontrollers documentation describes the framework as built to run machine learning models on devices with only kilobytes of memory, which puts inference within reach of microcontrollers like the ESP32.

Why ESP32

The ESP32 is particularly attractive for edge AI because it combines dual-core processing, integrated Wi-Fi and Bluetooth, low power consumption, affordable deployment cost, and a strong developer ecosystem. These characteristics make it ideal for smart infrastructure applications where scalability and low operational cost are critical.

In our architecture, the ESP32 continuously captures live audio through an I2S microphone, extracts MFCC features, runs CNN inference locally, and immediately forwards results to the traffic coordination system, all without a round trip to the cloud.
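The control flow of that loop can be sketched in a few lines. This is a desktop simulation, not our firmware: the stage functions, the 0.5 threshold, and the message format are all hypothetical stand-ins for the I2S capture, MFCC extraction, CNN inference, and forwarding steps described above.

```python
def run_pipeline(frames, extract_features, classify, forward, threshold=0.5):
    """Process each audio frame locally and forward every result downstream."""
    sent = 0
    for frame in frames:
        features = extract_features(frame)   # stands in for MFCC extraction
        score = classify(features)           # stands in for CNN inference, score in [0, 1]
        forward({"siren": score >= threshold, "score": score})
        sent += 1
    return sent

# Toy stand-ins so the sketch runs without hardware or a trained model.
def toy_features(frame):
    return [sum(abs(s) for s in frame) / len(frame)]   # mean absolute amplitude

def toy_classifier(features):
    return min(1.0, features[0])                       # louder frame, higher score

outbox = []                                            # stands in for the traffic coordination link
frames = [[0.0] * 8, [0.9, -0.9] * 4]                  # one silent frame, one loud frame
count = run_pipeline(frames, toy_features, toy_classifier, outbox.append)
```

Passing the stages in as functions keeps the loop itself independent of the hardware, so the same structure can be exercised on a desktop with recorded audio before it is ported to the device.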

The Future at the Edge

As smart cities evolve, embedded AI will become foundational infrastructure. Traffic systems, healthcare devices, industrial sensors, and public safety networks increasingly require intelligence that is fast, decentralized, low-power, scalable, and reliable. The future of AI is not only in data centers; it is also at the edge.