Deploying neural networks on embedded hardware introduces a difficult challenge: memory. Most convolutional neural networks are too large to run efficiently on microcontrollers like the ESP32, which has about 520KB of on-chip SRAM. Even relatively lightweight models often consume several megabytes of storage and RAM, making real-time embedded inference impractical.

To solve this problem, Greenwave TechLabs developed a quantized CNN optimized specifically for siren detection on low-power edge hardware.

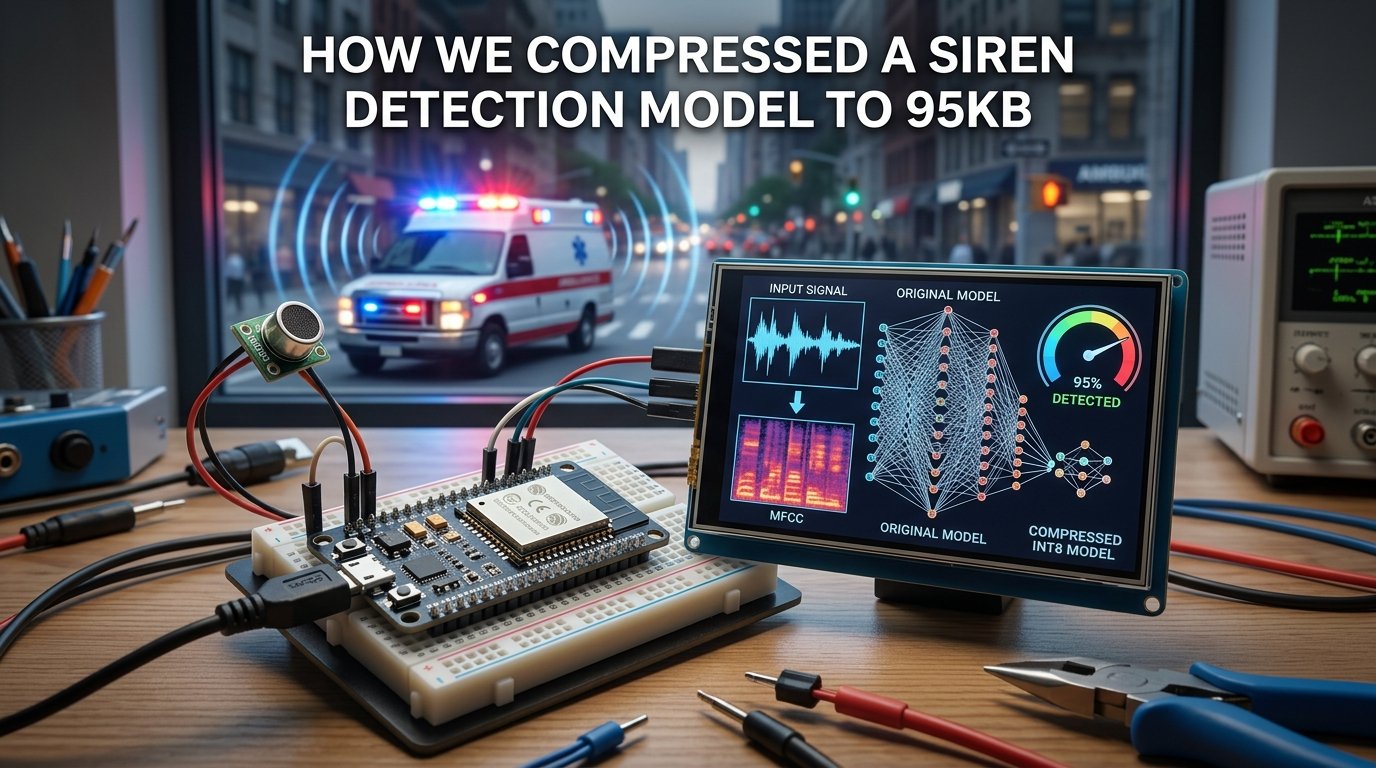

The Original Model

Our original acoustic model contained approximately 1.2 million parameters and occupied around 1.2MB. It combined multiple convolutional and dense layers: Conv2D layers, max pooling, ReLU activations, dense classification layers, and a sigmoid output for binary siren classification.
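To make the parameter count more concrete, here is a minimal sketch of how parameters accumulate in Conv2D and Dense layers. The layer sizes are hypothetical values chosen for illustration, not the architecture described above, and the helper names `conv2d_params` and `dense_params` are our own.

```python
def conv2d_params(kernel_h, kernel_w, in_channels, filters):
    """Conv2D: one weight per kernel cell per input channel per filter, plus one bias per filter."""
    return kernel_h * kernel_w * in_channels * filters + filters


def dense_params(inputs, units):
    """Dense: one weight per input-unit pair, plus one bias per unit."""
    return inputs * units + units


# Hypothetical layer sizes for illustration only (NOT the deployed architecture).
layers = [
    ("conv2d_1", conv2d_params(3, 3, 1, 16)),     # 3x3 kernels over a 1-channel feature map
    ("conv2d_2", conv2d_params(3, 3, 16, 32)),    # 3x3 kernels over 16 channels
    ("dense_1", dense_params(32 * 10 * 10, 64)),  # assumes a 10x10x32 map after pooling
    ("output", dense_params(64, 1)),              # single sigmoid unit
]

total = sum(count for _, count in layers)
for name, count in layers:
    print(f"{name:>9}: {count:>8,} parameters")
print(f"{'total':>9}: {total:>8,} parameters")
```

Max pooling and ReLU contribute no trainable parameters, which is why they do not appear in the count. In this toy configuration the first dense layer holds the large majority of the parameters, a common pattern in small CNNs.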

INT8 Quantization

To enable real-time inference, we applied INT8 quantization and deployed the model with TensorFlow Lite for Microcontrollers. Quantization converts 32-bit floating-point weights (and, in full-integer quantization, activations) into 8-bit integer representations, significantly reducing model size, memory usage, computational complexity, and inference latency.
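The idea behind INT8 quantization can be illustrated with a small sketch of affine quantization, where each float is mapped to an 8-bit integer through a scale and a zero point. This is a simplified illustration in plain Python, not the TensorFlow Lite implementation, which differs in details such as per-channel scales for convolution weights. The function names and sample weights are assumptions made for this example.

```python
def quant_params(values, qmin=-128, qmax=127):
    """Choose scale and zero point so the value range maps onto [qmin, qmax]."""
    lo = min(min(values), 0.0)  # include 0.0 so that zero is exactly representable
    hi = max(max(values), 0.0)
    scale = (hi - lo) / (qmax - qmin)
    if scale == 0.0:            # all values are zero; any positive scale works
        scale = 1.0
    zero_point = round(qmin - lo / scale)
    return scale, zero_point


def quantize(values, scale, zero_point, qmin=-128, qmax=127):
    """Map floats to integers, clamping to the INT8 range."""
    return [max(qmin, min(qmax, round(v / scale) + zero_point)) for v in values]


def dequantize(quantized, scale, zero_point):
    """Recover approximate floats from the integer representation."""
    return [(q - zero_point) * scale for q in quantized]


# Illustrative weights only; not taken from the deployed model.
weights = [-0.5, -0.1, 0.0, 0.25, 0.75]
scale, zero_point = quant_params(weights)
q_weights = quantize(weights, scale, zero_point)
restored = dequantize(q_weights, scale, zero_point)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("quantized:", q_weights)
print("max round-trip error:", max_error)
```

Each weight now fits in one byte instead of four, and the round-trip error for values inside the covered range is bounded by half the scale. That bounded error is why accuracy can remain high after quantization.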

After optimization, the deployed model shrank from approximately 1.2MB to only 95KB while still achieving 95.6% siren detection accuracy with an average inference latency of 120ms.

The Significance

According to the TensorFlow Model Optimization Toolkit documentation, quantization can reduce model sizes by up to 4x while improving inference efficiency on embedded devices. The reduction achieved here, from approximately 1.2MB to 95KB (roughly 12x), reflects one of the most important trends in modern AI: smaller models are becoming increasingly powerful.
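The arithmetic behind these figures can be checked with a short sketch. It uses only the sizes reported in this article and assumes 1MB = 1000KB; the variable names are our own.

```python
# Storage per parameter: float32 uses 4 bytes, int8 uses 1 byte.
FLOAT32_BYTES = 4
INT8_BYTES = 1
precision_ratio = FLOAT32_BYTES / INT8_BYTES

# Sizes reported in this article (assumption: 1MB = 1000KB).
original_kb = 1.2 * 1000
deployed_kb = 95
reported_ratio = original_kb / deployed_kb

print(f"float32 -> int8 storage ratio: {precision_ratio:.0f}x")
print(f"reported size reduction: {reported_ratio:.1f}x")
```

The 4x figure follows directly from replacing 4-byte floats with 1-byte integers, while the second number is simply the ratio of the two reported file sizes.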

Edge Inference Architecture

Our compressed CNN operates entirely on-device, with no cloud server required: audio is captured locally, MFCC features are extracted, CNN inference runs on the ESP32, and detection results trigger signal coordination. Keeping the whole pipeline on the device brings several benefits:

- Faster response

- Improved reliability

- Lower deployment cost

- Greater scalability for smart cities
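The on-device flow described above (capture, feature extraction, inference, trigger) can be sketched as a simple processing function. This is a structural illustration only: on an ESP32 the equivalent logic would be written in C or C++ against TensorFlow Lite for Microcontrollers. Every function below is a hypothetical placeholder, the feature extractor is not a real MFCC implementation, the "model" is a fixed logistic function rather than the CNN, and the 0.5 threshold is an assumption.

```python
import math

THRESHOLD = 0.5  # assumed decision threshold on the sigmoid output


def extract_features(samples):
    """Placeholder for MFCC extraction: returns the mean absolute amplitude."""
    return [sum(abs(s) for s in samples) / len(samples)]


def run_inference(features):
    """Placeholder for the quantized CNN: a fixed logistic function of the feature."""
    return 1.0 / (1.0 + math.exp(-(10.0 * features[0] - 5.0)))


def on_siren_detected(probability):
    """Placeholder for the signal-coordination hook."""
    return f"siren detected (p={probability:.2f})"


def process_window(samples):
    """Run one audio window through the pipeline, entirely locally."""
    features = extract_features(samples)
    probability = run_inference(features)
    if probability >= THRESHOLD:
        return on_siren_detected(probability)
    return None


# Synthetic audio windows standing in for locally captured microphone data.
quiet = [0.01 * math.sin(i / 5.0) for i in range(400)]
loud = [0.9 * math.sin(i / 5.0) for i in range(400)]

print(process_window(quiet))
print(process_window(loud))
```

Because each stage is a plain function call on the same device, no network round trip sits between capturing audio and acting on a detection.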

As embedded hardware continues to improve, edge AI systems will increasingly replace centralized cloud-only intelligence in real-time infrastructure environments.