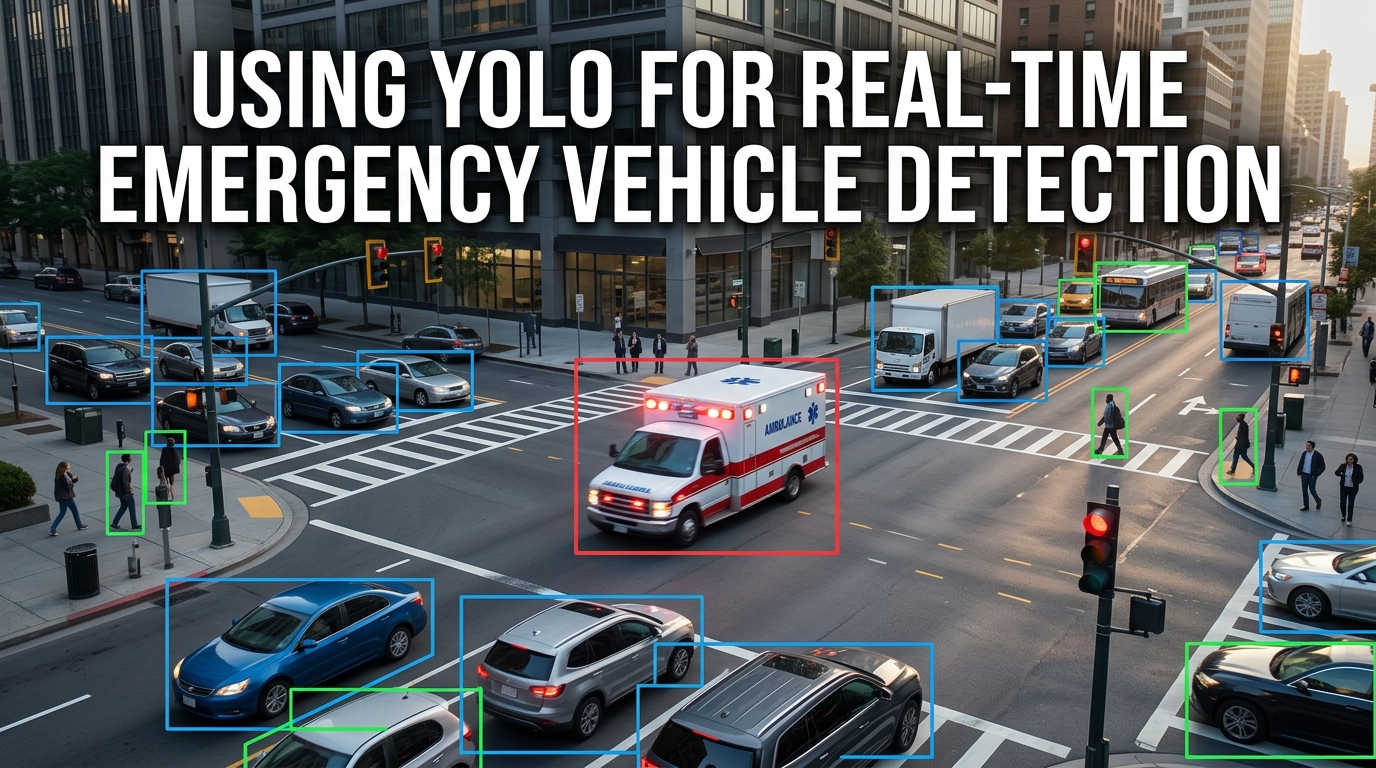

Computer vision is rapidly transforming intelligent transportation systems. Among modern object detection frameworks, YOLO ("You Only Look Once") has emerged as one of the most effective architectures for real-time detection tasks.

Unlike traditional image-processing pipelines, YOLO performs object localization and classification simultaneously within a single neural network pass. This enables extremely fast inference while maintaining strong detection accuracy.
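To make the "single pass" idea concrete, here is a minimal sketch of how one YOLOv5-style prediction row carries both the box and the class scores. The row layout `[cx, cy, w, h, objectness, class scores...]` follows the YOLOv5 output convention. The `Detection` type and `decode_prediction` helper are hypothetical names used only for illustration, and the sketch leaves out the non-maximum suppression a real pipeline would apply.

```python
from typing import NamedTuple, Sequence, Tuple


class Detection(NamedTuple):
    box_xyxy: Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels
    class_id: int
    confidence: float


def decode_prediction(row: Sequence[float]) -> Detection:
    """Decode one row laid out as [cx, cy, w, h, objectness, class scores...]."""
    cx, cy, w, h, objectness = row[:5]
    class_scores = row[5:]
    # Classification: pick the highest-scoring class.
    class_id = max(range(len(class_scores)), key=class_scores.__getitem__)
    # Final confidence combines "is there an object" with "which class is it".
    confidence = objectness * class_scores[class_id]
    # Localization: convert centre/size format to corner coordinates.
    box = (cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2)
    return Detection(box, class_id, confidence)


# Illustrative row with two classes; the numbers are made up.
example = decode_prediction([100.0, 50.0, 40.0, 20.0, 0.9, 0.1, 0.8])
print(example)
```

Both the box and the class come out of the same row, which is why no separate region-proposal or classification stage is needed.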

According to the Ultralytics YOLO documentation, YOLO architectures are optimized for real-time computer vision applications that require low latency and high throughput.

At Greenwave TechLabs, our emergency traffic preemption system uses YOLO-based vision intelligence as one part of its multi-modal detection framework. The Visual Guard subsystem continuously analyzes live traffic camera feeds to identify ambulances, fire trucks, emergency responders, and traffic movement patterns.

The Detection Workflow

The workflow runs in five stages, in order:

- Capturing real-time video frames

- Resizing and preprocessing frames

- Running YOLO inference

- Applying confidence filtering

- Generating emergency detection signals
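The five stages above can be sketched as a small pipeline. This is a minimal illustration, not the Visual Guard implementation: the label names, the 0.5 confidence threshold, and the `preprocess` and `infer` callables are all assumptions. In a real deployment `infer` might wrap a YOLOv5s model and `frames` might come from a camera stream.

```python
from dataclasses import dataclass
from typing import Any, Callable, Iterable, Iterator, List


@dataclass
class Detection:
    label: str
    confidence: float


# Hypothetical label names and threshold; not taken from the article.
EMERGENCY_LABELS = {"ambulance", "fire_truck"}
CONF_THRESHOLD = 0.5


def filter_by_confidence(detections: List[Detection],
                         threshold: float = CONF_THRESHOLD) -> List[Detection]:
    """Stage 4: drop detections below the confidence threshold."""
    return [d for d in detections if d.confidence >= threshold]


def emergency_signal(detections: List[Detection]) -> bool:
    """Stage 5: raise a signal if any surviving detection is an emergency class."""
    return any(d.label in EMERGENCY_LABELS for d in detections)


def run_pipeline(frames: Iterable[Any],
                 preprocess: Callable[[Any], Any],
                 infer: Callable[[Any], List[Detection]],
                 threshold: float = CONF_THRESHOLD) -> Iterator[bool]:
    """Yield one emergency signal per captured frame."""
    for frame in frames:                      # Stage 1: capture
        model_input = preprocess(frame)       # Stage 2: resize and preprocess
        detections = infer(model_input)       # Stage 3: model inference
        kept = filter_by_confidence(detections, threshold)
        yield emergency_signal(kept)


# Usage with stand-in components instead of a camera and a real model.
fake_outputs = {
    "frame0": [Detection("car", 0.91)],
    "frame1": [Detection("ambulance", 0.32)],   # too uncertain, filtered out
    "frame2": [Detection("ambulance", 0.88)],
}
signals = list(run_pipeline(fake_outputs, lambda f: f, fake_outputs.__getitem__))
print(signals)
```

Passing the capture, preprocessing, and inference steps in as arguments keeps the filtering and signalling logic testable without a camera or GPU.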

Why YOLOv5s

We selected YOLOv5s because it balances inference speed against detection accuracy while keeping computational overhead low enough for edge deployment.

- 0.895 mAP for visual detection

- 0.92 precision on the ambulance class

- Approximately 58 ms visual inference latency

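At roughly 58 ms per frame, the model can evaluate about 17 frames per second if frames are processed one at a time, which is fast enough for an intersection to flag an approaching emergency vehicle well within a second of it entering the camera's view.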
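The arithmetic behind a latency budget like this is simple enough to sketch. The 58 ms figure comes from the numbers above. The 60 km/h vehicle speed is an assumption chosen only for illustration, and the calculation ignores capture, preprocessing, and network delays.

```python
def frames_per_second(latency_ms: float) -> float:
    """Throughput when frames are processed strictly one after another."""
    return 1000.0 / latency_ms


def distance_per_inference_m(speed_kmh: float, latency_ms: float) -> float:
    """How far a vehicle travels while one frame is being evaluated."""
    speed_m_per_s = speed_kmh / 3.6
    return speed_m_per_s * (latency_ms / 1000.0)


LATENCY_MS = 58.0          # visual inference latency quoted in the article
ASSUMED_SPEED_KMH = 60.0   # assumed emergency-vehicle speed, for illustration

print(f"{frames_per_second(LATENCY_MS):.1f} frames/s")   # 17.2 frames/s
print(f"{distance_per_inference_m(ASSUMED_SPEED_KMH, LATENCY_MS):.2f} m per inference")  # 0.97 m
```

Under these assumptions a vehicle moves about one metre between consecutive evaluations.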

Adaptability Over Rule-Based Systems

Unlike older rule-based computer vision systems, deep learning models such as YOLO can adapt to varying lighting conditions, partial vehicle occlusion, urban traffic complexity, and dynamic road environments.

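In our framework, visual detection serves as one layer within a larger redundant decision architecture. The system combines YOLO-based vision, acoustic siren recognition, and secure LoRa V2I communication. Because each channel tends to fail under different conditions (a camera view blocked by a large vehicle, a siren masked by traffic noise, a dropped radio packet), a detection missed by one layer can still be caught by another, which improves reliability in real-world deployment.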
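One simple way to combine three such channels is majority voting. The article does not describe the actual fusion rule, so the 2-of-3 policy below is purely an assumption, and `SensorReport` and `should_preempt` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SensorReport:
    vision_detected: bool      # YOLO-based camera detection
    siren_detected: bool       # acoustic siren recognition
    v2i_authenticated: bool    # valid message received over LoRa V2I


def should_preempt(report: SensorReport, min_agreeing: int = 2) -> bool:
    """Assumed policy: trigger preemption when enough channels agree."""
    votes = sum((report.vision_detected,
                 report.siren_detected,
                 report.v2i_authenticated))
    return votes >= min_agreeing


# Camera is occluded, but the siren and the radio message agree.
occluded = SensorReport(vision_detected=False,
                        siren_detected=True,
                        v2i_authenticated=True)
print(should_preempt(occluded))

# A single channel firing alone is not enough under this policy.
lone_camera = SensorReport(vision_detected=True,
                           siren_detected=False,
                           v2i_authenticated=False)
print(should_preempt(lone_camera))
```

A voting rule trades sensitivity for robustness: raising `min_agreeing` reduces false triggers, while lowering it to 1 lets any single channel request preemption.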

The Broader Picture

Real-time vision systems are becoming increasingly important for autonomous mobility, adaptive intersections, intelligent surveillance, smart city infrastructure, and emergency response coordination. As urban systems evolve, cameras are no longer passive monitoring devices; they are becoming intelligent sensors capable of understanding and reacting to their environment in real time.